Operators of large-scale data centers have been steadily increasing the transmission speeds of their Ethernet networks. While many organizations continue to rely upon 10Gbps (10G) technology, with some migrating to 40G, large-scale data centers are rapidly moving to 100G and beyond. Research firm IHS Infonetics has predicted that 100G will make up more than half of data center optical transceiver transmissions by 2019.

Traditionally, data centers have used link aggregation to increase throughput. Multiple 1G or 10G ports in a switch are “bundled” into a single logical connection with the aggregate bandwidth of the individual links. The Link Aggregation Control Protocol (LACP) is typically used to negotiate and maintain the bundle, and the switch load balances traffic across the member links.

This works reasonably well, but there are significant drawbacks. First of all, load balancing is tricky. You have to ensure that all of the packets belonging to a particular session are sent across the same link, or else the packets may arrive out of order. This can decrease efficiency and cause problems with some applications.

All of the links in an aggregation group must run at the same speed (all 1G or all 10G, for example) and be configured the same way. You can only put up to eight links in a group, with many devices restricting you to a smaller number. For best results, the group should include an even number of links. However, even if you set up everything correctly, the load balancing isn’t very efficient: because each session is pinned to one link, a single session can never use more than one link’s bandwidth, and traffic may spread unevenly across the group.
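To make the session-pinning constraint concrete, here is a minimal Python sketch of hash-based link selection. It is an illustration under stated assumptions, not any vendor's actual algorithm: the `pick_link` function, the CRC32 hash, the choice of header fields and the sample addresses are all hypothetical. Real switches use their own hash functions and configurable field sets.

```python
import zlib
from collections import Counter

NUM_LINKS = 4        # assumed size of the aggregation group
LINK_SPEED_GBPS = 10 # assumed speed of each member link


def pick_link(src_ip, dst_ip, src_port, dst_port, num_links):
    """Map a session to one member link by hashing its header fields.

    The same session always produces the same hash, so all of its
    packets follow the same link and stay in order.
    """
    key = f"{src_ip}:{src_port}->{dst_ip}:{dst_port}".encode()
    return zlib.crc32(key) % num_links


# 200 made-up sessions from 50 clients to one server.
flows = [
    (f"10.0.0.{i % 50 + 1}", "10.0.1.10", 40000 + i, 443)
    for i in range(200)
]

# Count how many sessions land on each link.
link_counts = Counter(pick_link(*flow, NUM_LINKS) for flow in flows)

for link in range(NUM_LINKS):
    print(f"link {link}: {link_counts[link]} sessions")

# A single session never spans links, so its ceiling is one link's
# speed, not the aggregate bandwidth of the group.
print(f"per-session ceiling: {LINK_SPEED_GBPS}G of "
      f"{NUM_LINKS * LINK_SPEED_GBPS}G aggregate")
```

The per-link counts depend entirely on the hash and the traffic mix, which is why the distribution is rarely perfectly even in practice.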

Link aggregation also ties up a bunch of ports and increases the overall complexity of the switching infrastructure. By implementing 100G, data centers gain operational efficiencies and a more scalable environment to accommodate ever-increasing density and rapid growth.

The availability of more cost-efficient equipment has helped to spur the adoption of 100G. New transceivers have emerged that are less expensive and consume less power than previous generations. And now, complementary metal-oxide-semiconductor (CMOS) technology is being used for transceivers, enabling even faster transmission speeds while using less power. As a result, the cost per port of 100G has come down significantly in recent years, with some 100G solutions costing less per gigabit than comparable 10G and 40G products.

Of course, there’s no sign that network demands will slow down, so 100G is really just a stepping stone toward even faster connections. Hyperscale data center operators and service providers have already implemented 400G connections between their data centers, with 100G connections in the data center core and 25G to individual servers.

It’s only a matter of time before 100G enters the mainstream, so how do you prepare your network? Specifically, how do you build out your cabling infrastructure to facilitate migration to 100G?

With Base-8 MTP cable and modular patch panels from Enconnex, you gain the flexibility you need to future-proof your network. Base-8 has become the preferred choice for optical Ethernet connectivity, with a clear path from 40G to 100G to 400G. The Enconnex modular patch panels have slots that support cassettes with 10G, 40G or 100G ports, making it easy to swap out the port interface without altering the cabling infrastructure. This saves time, money and headaches as well as valuable real estate within your environment.
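One way to see why Base-8 maps cleanly onto parallel optics is to count fibers. The sketch below is a rough illustration, not a cabling design tool. It assumes a four-lane interface such as 40GBASE-SR4 or 100GBASE-SR4, which uses four transmit and four receive fibers per port, and it assumes connectors are plugged in directly with no conversion modules. The `parallel_ports` function and the 24-fiber trunk are hypothetical.

```python
def parallel_ports(trunk_fibers, fibers_per_connector, fibers_per_port=8):
    """Return (ports supported, fibers left unused) for a trunk.

    Assumes each port needs `fibers_per_port` fibers from a single
    connector and that leftover fibers in a connector go unused.
    """
    connectors = trunk_fibers // fibers_per_connector
    ports_per_connector = fibers_per_connector // fibers_per_port
    ports = connectors * ports_per_connector
    unused = trunk_fibers - ports * fibers_per_port
    return ports, unused


# Compare a hypothetical 24-fiber trunk terminated two ways.
for name, per_connector in (("Base-8", 8), ("Base-12", 12)):
    ports, unused = parallel_ports(24, per_connector)
    print(f"{name}: {ports} ports, {unused} fibers unused")
```

Under these assumptions, the Base-8 trunk uses every fiber, while the Base-12 trunk leaves four fibers dark in each connector.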

The move to 100G is inevitable, and now’s the time to plan your migration strategy. Let us show you how modular patch panels from Enconnex provide flexibility, operational efficiency and investment protection.

Rahi is an independently operated subsidiary of Wesco Distribution, Inc. Wesco is a Fortune 200 company with annual revenues of more than USD 19 billion and more than 19,000 employees, operating in 50+ countries. Rahi was acquired by Wesco in November 2022. With warehouses and offices in 50+ countries, Rahi offers the advantage of IOR services, local currency billing, and RMA services, helping businesses operate efficiently and successfully at any location. Rahi combines its global reach with in-depth analysis services to understand clients’ business goals, IT requirements, and operations while placing them on the journey toward success.

Do you struggle to offer your remote participants an inclusive experience? Do they often feel neglected and unheard?...

Technology has integrated every aspect of business operations. Even a few seconds of downtime can result in...

The increasing expansion of the Internet of Things (IoT), the rise of cloud computing and software-as-a-service...

Data centers are critical to the digital world, powering everything from social media platforms and streaming services...

In an era where digital transformation is reshaping businesses, the uptime of data center services has become a...

Today, our lives are dependent on technology and the services data centers provide. As more and more businesses...

In a world that is only always “on”, high costs are associated with downtime. According to a Poneman Institute...

With more organizations digitizing their operations, the volume of data that organizations are seeking to manage is...

Data centers are the engines powering the rapidly growing information economy. In today’s digital world, the demand...

In the digital age, the way we communicate and collaborate has changed dramatically. As we’ve shifted towards a...

In the era of digital transformation, Artificial Intelligence (AI) continues to push the boundaries of what is...

In today’s rapidly evolving digital landscape, the way businesses communicate and collaborate has undergone...

In our interconnected world, data centers play a vital role, acting as the backbone of our digital infrastructure. As...

In the universe of coding, even the most seasoned developers sometimes find themselves seeking guidance or hunting for...

As companies embrace the digital age, reliable and efficient network operation has become essential. To that end,...

Technology advancements have forced organizations to adopt technologies into their operations. In the digital age,...

Are your IT personnel able to keep up with the rapidly evolving digital age, maintaining optimal network performance...

Innovations in technology have opened up a host of opportunities for organizations to streamline their operations and...

In today’s technology evolved society, customers expect the same efficiency and speed from small businesses as they...

Having a reliable and secure IT infrastructure is no longer a nice-to-have option for growing businesses. In a digital...

The advancement of technology and the need for digital transformation have led many organizations to adopt a...

A resilient business can be defined as one that can bounce back from any kind of setback with minimal downtime. In...

Technology is no longer a nice-to-have option for businesses. It can be the difference between a successful business...

In today’s digital-driven business landscape, network uptime is no longer just a nice-to-have; it’s a...

A Networks Operation Center (NOC) is the first line of defence against network interruptions, and failures. With most...

In an increasingly digital world, the specter of cyber threats looms large. From large corporations to small...

In the digital age, data centers have become the backbone of businesses, housing critical infrastructure that keeps...

In the digital age, Data Center Storage Solutions are rapidly evolving. The advent of cloud computing has led to a...

The advent of the digital age has brought about an exponential growth in data generation. As businesses transition...

Introduction: Zoom has become an essential tool in today’s digitally driven world, enabling seamless remote...

Introduction: As the world embraces remote work and virtual meetings, Zoom has become an essential tool for...

The digital revolution is upon us. The insatiable demand for data has driven a meteoric rise in the number of data...

As a company specializing in high-performance data center solutions, we at Rahi Systems understand the importance of...

In an era where technology advancements are taking giant leaps forward, staying updated and connected with the latest...

As a technology enthusiast, you are likely always on the hunt for the next ground-breaking innovation, the latest...

Cloud technology is a great indicator of how the world is progressing and how organizations are using the cloud to...

Google Cloud Platform (GCP) is a suite of cloud computing services that offer hosting on the same supporting...

In an increasingly digital age, the importance of data centers in storing, managing, and disseminating data cannot be...

Countless data centers power our digital world supporting billions of digital processes every day. It’s easy to...

Seamless communication and collaboration are driving boardroom efficiency enabling directors to collaborate actively...

The global Data Center Infrastructure Management (DCIM) market size is expected grow at a CAGR of nearly 16% from 2023...

The safety and security of data centers have never been more critical than in the current hyper-connected digital...

As businesses increasingly rely on digital infrastructure to operate, the need for reliable and secure data centers...

The increasing adoption of advanced cloud computing technologies such as AI, IoT, machine learning, and big data has...

Artificial Intelligence (AI) has taken center stage and the massive adoption of AI has disrupted businesses across...

In the digital era, the shift to cloud-based infrastructure has become not just a trend, but a business necessity. A...

As organizations increasingly adopt cloud-based infrastructure, ensuring cloud migration security has become a...

In today’s digital age, businesses are increasingly recognizing the need to modernize their IT infrastructure ...

Hybrid cloud adoption represents the fusion of private and public cloud resources, creating an integrated, flexible,...

Cloud-computing has been a disruptive game-changer for organizations forcing organizations to digitally transform. The...

Clouds have evolved. With the proliferation of big data, clouds have developed from being platforms used for merely...

The digital era is here. Organizations are adopting hybrid multi-cloud systems that offer several advantages such as...

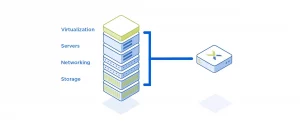

In recent years, Software-Defined Data Centers (SDDC) have gained significant traction as a next-generation data...

The primary benefit of data center cooling units is temperature regulation. Maintaining an optimal temperature range...

Cloud computing has experienced a dramatic acceleration becoming an essential catalyst for businesses looking for...

As organizations increase their dependence on technology, there are a host of new challenges that arise such as...

In today’s digital age, almost every sector relies on private and public cloud computing technologies to operate...

As technology advances, the need for more storage of digital data has grown exponentially. Network-attached storage...

The accumulation of massive data has spiked the need for efficient and cost-effective data management solutions. Data...

In today’s world, cellular networks have become an essential part of our daily lives. However, the cost of using...

As the world becomes increasingly connected, the importance of fast and reliable network connections has grown...

Managed services are an increasingly popular approach to IT infrastructure management and support. As businesses...

A Managed Services Model is a way of outsourcing IT management and support services to a third-party provider. This...

Data centers are integral parts of the modern digital world, providing storage and processing power for a vast array...

As businesses increasingly rely on technology, managing IT operations can be a challenge. A Managed Services Model for...

In today’s rapidly-changing environment, it is paramount for businesses to be swiftly able to connect and...

Blockchain technology has revolutionized the data that would have been difficult to predict a few years ago. What is...

The internet has come a long way since its inception in the early 90s. Beginning from fetching information for users...

Phishing has become a rage in the threat actors’ community to start exploiting people. While the technique...

Data centers play a critical role in the efficient operation of businesses, providing secure and reliable hosting...

In today’s digital age, data centers play a critical role in the efficient operation of businesses. As companies...

Wesco International (NYSE: WCC), a leading provider of business-to-business distribution, logistics services and supply...

Data centers play a crucial role in the efficient operation of businesses, providing secure and reliable hosting...

Small and medium-sized enterprises (SMEs) often face unique challenges when it comes to managing their IT...

Virtualizing your data center can bring many benefits, including reduced costs, improved scalability, and greater...

As the digital world evolves, so does the need for more efficient and cost-effective data management solutions. One...

In today’s digital world, data centers play a crucial role in the efficient operation of businesses. From...

In today’s digital age, businesses are generating an enormous amount of data, which requires secure and reliable...

Data centers are the backbone of the modern digital economy, supporting everything from social media to online...

Rahi, a leading global IT solutions provider, systems integrator and VAR has announced their renewal as foundation...

PITTSBURGH–(BUSINESS WIRE)– Wesco International (NYSE: WCC) today announced it has completed the purchase...

Exclusive agreement gives Rahi customers access to the first UPS Capable of reallocating power draw during peak...

In today’s digital age, data is more valuable than ever before. As such, it is essential that companies take all...

Despite the rise of cloud computing, organizations are still maintaining many applications and services on-premises....

Sushil Goyal, Co-Founder & COO, Rahi, says,” We are delighted to host our flagship tech event for the first...

Global Data Center “One-Stop” Delivery, Empowers Chinese Enterprises Overseas Shanghai, China – April 27th,...

Global Data Center “One-Stop” Delivery, Empowers Chinese Enterprises Overseas Shanghai, China – April 27th, 2022...

Fremont, Calif. – February 24, 2022 – Rahi, a global IT solutions provider, announced two significant leadership...

Rahi, global IT solutions provider, announces that its subsidiary in China obtained Cisco Premier Integrator...

Singapore – 13.10.2021 – Global IT Infrastructure company, Rahi has today announced a partnership...

Limerick, Ireland — June 22nd, 2021 — Rahi, a global IT infrastructure company, is celebrating its fifth year in...

[Cape Town, SA] Rahi has partnered with Cobalt Iron Inc., a leading provider of SaaS-based enterprise backup and data...

APAC data centres will benefit from EkkoSoft Critical’s ability to consistently unlock 30% data centre cooling...

GLOBAL DIGITAL INFRASTRUCTURE LEADERS ANNOUNCE THE PROMOTION OF DATA CENTER SCHOLARSHIPS AT IT SLIGO 25.03.2020:...

Los Angeles, USA, February 09, 2021 – Utelogy Corporation is adding Rahi to its team of global solutions...

Cape Town, South Africa – 26.01.2021: Rahi, a global IT solutions provider, has announced a $5 million capital...

Fremont, CA – 01.19.2021: Rahi, a global IT systems integrator, has announced details of a global reseller...

[Pune, India, December 10th, 2020] Rahi, India’s leading Global Enterprise & Network Solutions Provider...

The Investment will see the Creation of 50 Jobs Over the Next Three Years. Fremont, CA – December 7, 2020 – Rahi,...

Fremont, CA – November 2020 – Founded in 2012 and with 700+ employees located in over 30 worldwide locations, Rahi...

The new PaaS venture will deliver cost predictability during unpredictable times. Fremont, CA – August 26, 2020 –...

Cloud practices, professional services, and managed services will complement Rahi’s industry-leading expertise in...

Rahi’s data center and networking experts help customers build out lab environments that fully leverage Spirent’s...

Strategic Acquisitions Enable Rahi to Expand Its Capabilities in Network Implementation and Support and Data...

Fremont, Calif. — June 3, 2019 — Rahi Systems announced today its partnership with maincubes, a European data...

Fremont, Calif. — April 30, 2019 — Rahi Systems has been honored with ZPE Systems 2018 Partner of the Year Award....

In today’s digital age, data centers are an integral part of almost every industry. With the growth of big data,...

Certified Data Center Design Program Teaches Best Practices for the Design, Construction and Operation of Data Center...

Data centers have been a critical part of the technological revolution, powering the ever-increasing demand for...

Strategic Acquisitions, New Locations and Enhanced Solutions Enable Rahi to Support Integrated Environments and...

The Open Compute Project (OCP) is an initiative started by Facebook in 2011 to promote open-source hardware designs...

InfoComm 2018, Las Vegas, June 13, 2018 – Rahi Systems has been honored with a Samsung Smart Signage Award for...

In the rapidly evolving world of IT, data is the new oil, and data centers are the refineries. The demand for...

Fremont, Calif. — December 20, 2017 — Rahi Systems announced today its partnership with Nlyte Software, a software...

Fremont, Calif. — November 27, 2017 — Rahi Systems announced today that its Pune, India, branch has achieved ISO...

Prestigious Award Recognizes Rahi’s Rapid Ascent from Startup to Global IT Powerhouse Fremont, Calif. — November...

FlexIT Pod Maximizes Efficiency with Integrated In-Row Cooling System Fremont, Calif. — August 28, 2017 — Rahi...

Efficient Units Deliver Higher Capacity to Address Today’s Heat Loads Fremont, Calif. — August 11, 2017 — Rahi...

RF Shielded Rack Isolates Equipment from Radio Frequency Interference Fremont, Calif. — August 2, 2017 — Rahi...

ServerLIFT Helps IT Personnel Move Equipment Efficiently and Safely Fremont, Calif. — July 17, 2017 — Rahi Systems...

Fremont, Calif. — June 22, 2017 — Rahi Systems announced today a partner agreement with Daxten, a leading...

Fremont, Calif. — May 15, 2017 — Rahi Systems is thrilled to announce the opening of its new office in Istanbul,...

Flexible Solution Gives Customers Greater Operational Agility Fremont, Calif. — January 24, 2017 — Rahi Systems...

Addition of Five Locations to Support Global and Local Partners Fremont, Calif. — December 6, 2016 — Rahi Systems...

Fremont, Calif. — November 10, 2016 — Rahi Systems is pleased to announce the launch of its new website, with a...

Rahi Systems Becomes Authorized Reseller with Motivair’s ChilledDoor® Division Fremont, Calif. — September 19,...

FlexIT R Series Server Delivers Modular Compute and Storage Architecture with multi-generational Intel architecture....

Exclusive agreement gives Rahi customers access to the first UPS Capable of reallocating power draw during peak...

Marcus Doran, Sales Director for Northern Europe Strategic Location Will Serve Customers throughout Northern...

Fremont, California March 24th – Rahi Systems and ServerLIFT Corporation announce their partnership to distribute...

FREMONT, Calif.-Rahi Systems, a Data Center & IT Solutions provider, launched today its FlexIT Pre-Engineered...

FREMONT, Calif. – Rahi Systems, a fast growing and innovative Data Center Solutions Provider, today announced the...

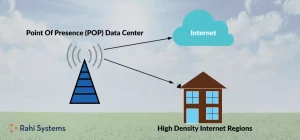

What is a POP Data Center? PoP is the new culture among data centers. The cloud is getting crowded and its result...

Most of us give little thought to electricity — until we get the bill or there’s an interruption in service. But...

Data centers are getting denser, hotter and consuming more power than ever, and that’s driving significant growth in...

Video meetings have become a way of life in a work-from-home, social distancing world, and Zoom has become the video...

Data Center providers are buying real estate at a rapid pace as demand for space continues to skyrocket. Of course,...

Global Data Center “One-Stop” Delivery, Empowers Chinese Enterprises Overseas Shanghai, China – April 27th, 2022...

A system-on-chip (SoC) is an integrated circuit that incorporates all of the components of a computer system. These...

It’s that time of year when IT industry analysts make predictions about the trends that will drive technology...

Data centers consume enormous amounts of electricity. According to a report in Computer World , it takes 34 power...

The Internet of Things (IoT) promises to transform entire industries by creating never-before-imagined efficiencies...

Over time, organizations often adopt multiple security tools to address specific threats. According to the Ponemon...

Fremont, Calif. – February 24, 2022 – Rahi, a global IT solutions provider, announced two significant leadership...

Rahi, global IT solutions provider, announces that its subsidiary in China obtained Cisco Premier Integrator...

Rahi’s presence in Taiwan On December 3rd, 2021, Rahi celebrated its official Taipei office opening in Songshan...

Sydney’s scenic coastal trails with breathtaking ocean views inspire many running enthusiasts. Rahi’s Robert...

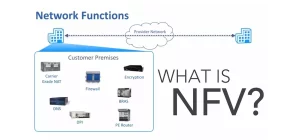

Present-day enterprises are increasingly turning to network virtualization as an alternative to physical devices. This...

Although AI and AR were developed more than a decade ago, they experienced a slow adoption into mainstream technology,...

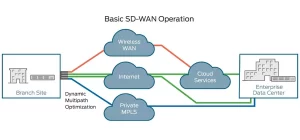

We’ve all heard this saying: “Good, fast or cheap. Pick any two.” With software-defined WAN (SD-WAN), you can...

Work-from-home arrangements are likely to continue, either in a full-time remote work or hybrid model. Many people...

As organizations look at redesigning their office space to support today’s hybrid work model, they should consider...

Before data center infrastructure management (DCIM) solutions entered the market a dozen years ago, IT managers and...

Data centers are experiencing exponential demand for bandwidth driven by massive growth in data. Next-generation...

Cloud computing has been indispensable over the past year and a half, giving organizations access to the...

Organizations are increasingly looking to get out of the data center management business. The ongoing costs and...

Closing the IT Skills Gap with Cloud Managed Services! Cloud computing underpins nearly every major business...

The past year brought a new set of challenges to businesses, including supply chain disruptions, increased operational...

In our last post, we discussed five things that organizations should consider before bringing employees back into the...

As more people are vaccinated, organizations are starting to develop plans for bringing employees back into the...

Security experts estimate that as many as four out of every five data breaches are caused in part by weak or...

Over the past year, organizations have learned that business conditions can change rapidly and on a massive scale....

Importance of Data Center Environmental Monitoring Numerous factors affect the performance and availability of the...

The COVID-19 pandemic and shift to remote work have underscored the importance of embracing digital technologies....

A British Airways data center failure in 2017 caused flight delays, cancellations and lost luggage for 75,000...

Digital Signage Is Now a Mission-Critical Component of the IT Infrastructure. Over the past year, most IT strategy...

The COVID-19 pandemic has caused organizations of all sizes to accelerate their digital transformation initiatives....

In today’s fast-paced and ever-evolving world, the workplace environment is becoming increasingly unpredictable....

Before the pandemic, applications such as Zoom, Microsoft Teams, and other virtual collaboration tools were not...

Adoption of software-defined WAN (SD-WAN) slowed somewhat in 2020 due to economic concerns. However, Dell’Oro Group...

When the cloud started taking off, industry analysts predicted that IT teams would become “brokers” of technology...

Why Enterprise Video Conferencing Is Best Left to Professionals Video conferencing has been a lifeline for employees...

As data volumes continue to increase, data storage systems have become a mission-critical component of enterprise IT...

The odds that your organization will suffer a cyberattack are extremely high. In a recent Ponemon Institute study, 66...

In 2012, we founded Rahi Systems with a vision of becoming a trusted source for the global delivery of IT solutions...

Subscription Model for On-Premise Infrastructure The cloud has revolutionized the way IT resources are provisioned and...

Managed services have been popular among organizations of all sizes for many years. In essence, the managed services...

Anytime there’s a crisis, hackers take advantage of the opportunity to attack systems, spread malware, and dupe users...

Poor quality audio and video conference calls and digital meetings beset by technical issues are a huge productivity...

Almost all businesses today are deeply dependent on information technology, which is why studies consistently find that...

Cloud services and applications have played a central role in enabling organizations to remain operational during the...

End-users aren’t the only ones working from home. Many IT staff are also doing their jobs remotely, making it...

The operational changes brought on by the COVID-19 crisis are likely to have a long-term impact on the IT...

Over the past several years, the role of IT has been slowly transitioning from managing technology to enabling business...

Will the COVID-19 pandemic finally bring virtual desktop infrastructure (VDI) into the mainstream? It certainly looks...

Dear Rahi Team, The past week in the US has been witness to an ongoing debate about better inclusivity and the racism...

Fun Fact: At a recent networking conference we attended, a poll was taken and it was discovered that more people in...

The COVID-19 pandemic is driving increased interest in virtualization as a means of remotely managing servers. Many...

Much of the discussion around work-from-home technology has focused on network connectivity and security. Those things...

The Right Collaboration Tools for Remote Workers Various studies have shown that knowledge workers, managers, and...

When your business is global, there are international rules and regulations that you have to play by. To streamline...

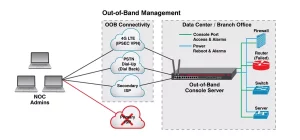

Out-of-Band Management (OOBM) Solutions For Data Centers The high cost of downtime is well documented. According to...

Cybercriminals are preying on fears of the COVID-19 coronavirus pandemic to spread malware, perpetrate scams, and...

The data center has undergone a number of major changes over the years, from mainframes to client/server computing to...

When we think about IT complexity, we often visualize stacks of hardware appliances. But there’s growing complexity...

Any organization with multiple branch offices needs connectivity between those locations. Traditionally, organizations...

The Coronavirus has not officially reached global pandemic status, but organizations should nevertheless be prepared...

Over the past decade, many organisations have deployed Data Centre Infrastructure Management (DCIM) platforms. This is...

Forrester Research estimates that about 20 percent of enterprise workloads now run in the public cloud. A survey by...

Networking has always been a hardware-centric affair. Organizations purchase, implement and maintain a wide array of...

Ming Gao is a system engineer based in Shanghai. Before finishing his degree in the university, Ming knew exactly where...

To celebrate National Technology Day, our Rahi Engineers are reflecting on some of the major technical advances in...

A “spare” can be defined as any asset that is not in production, but that is available to use when needed. Its...

5G is a complex hybrid of different radio frequency (RF) technologies connected to an ultrafast, managed network...

When organizations are looking to design and implement an A/V system, they seldom consider the long-term maintenance...

What exactly do we mean by “workplace productivity”? It’s not the same thing as efficiency, which is simply the...

A passion for computers from an early age and an inquisitive mind that pondered the workings of machines inspired Rory...

Every organization seeks to derive maximum value from its data, whether it’s to enhance the customer experience,...

Amazon Web Services introduced the AWS Transit Gateway service in November 2018 to enable customers to connect multiple...

Not that long ago, audio and video components and IT systems were distinct elements of the corporate environment that...

Organizations continue to prioritize aisle containment as a means of controlling energy costs and protecting data...

Even in today’s threat climate, many organizations lack the skill sets, processes and tools they need to protect...

The Internet of Things (IoT) is already transforming entire industries and bringing an array of benefits to...

Hyper-converged infrastructure (HCI) solutions have been around for a number of years and have seen broad adoption in...

The term “structured cabling” conjures up images of a neatly installed cabling plant with everything properly...

The firewall remains an essential component of a layered approach to cybersecurity. However, routing network...

Video conferencing used to require expensive hardware that was installed only in the largest conference rooms and...

Today, many organizations opt for an open office environment — eliminating walls and cubicles in order to break down...

Working with A/V professionals to design your meeting space offers a number of benefits. People who specialize in A/V...

Apple devices are very popular among consumers — devout users have been known to camp outside Apple stores when a new...

Many organizations have adopted a cloud-first IT strategy to improve the way they do business. Public, private and...

The IT skills gap has plagued organizations for a number of years, and continues to increase. Gartner has reported...

In World War II, shortages of raw materials and components often forced manufacturers to look for substitutes. A couple...

Software-defined application delivery controllers provide key benefits In our last post, we discussed how growing...

Half of all IT workloads still run in enterprise data centers and will continue to do so through at least 2021,...

There are a number of reasons why an organization might need to relocate data center equipment. As IT demands increase...

We’ve been talking about some of the networking issues that can impact A/V systems. First, we took a look at some of...

In our last post, we explained why audio video solutions requirements should be considered when designing the network...

Video conferencing, digital signage and other A/V components are an increasingly critical part of the IT environment....

Maybe you’ve just completed a data center buildout and moved all of your racks, cabinets and IT equipment into the...

The commercial A/V industry is growing at a fantastic rate. Products are becoming more affordable, which puts...

Data storage volumes continue to grow exponentially, creating challenges for organizations of all sizes. Smaller...

If you were alive in the 1970s, you probably remember the videotape “format war” between Sony’s Betamax and...

Wireless Local Area Networks (WLANs) have become the key piece of any enterprise’s core infrastructure and network...

In many organizations, A/V equipment is becoming as mission-critical as IT equipment. These organizations are using...

For years, organizations have been using hot-aisle/cold-aisle configurations to manage airflow in the data center,...

Large enterprise level businesses, or smaller businesses that are beginning to expand, face major problems of...

When designing a boardroom, conference room or training facility, organizations tend to focus on the video component....

The structured cabling market is expected to grow from $11 billion in 2018 to $25 billion by 2025, according to newly...

When it comes to building out a data center, it’s important to partner with a solution provider who has expertise in...

Mobile and cloud technologies may not have entirely erased the network perimeter, but they’ve certainly punched a...

In many industries, a professional certification or license shows that you have the minimal educational background and...

Video walls were once exclusively used by high-end retailers, stadiums and entertainment venues. Today, organizations...

The right collaboration technology can deliver real business benefits by improving communication, streamlining...

Despite repeated predictions of its impending demise, tape storage remains a remarkably healthy technology. In fact,...

Even with increased awareness of the cost of downtime, the majority of North American businesses aren’t ready to deal...

Organizational value can be derived from technology investments through cost reduction, improved productivity, enhanced...

Once limited to science fiction movies and conversations about what could be, artificial intelligence (AI) now has use...

With so much emphasis placed on securing data across the network, endpoints and the cloud, it’s easy to overlook the...

Autonomous vehicles may be driving on Texas roads soon. The Texas Department of Transportation just announced its plan...

When we started doing commercial A/V in the early 2000s, professional A/V was not focused on the user so much as making...

Digital transformation continues to be a top business initiative in 2019. At the same time, organizations are looking...

One of the biggest challenges faced by IT managers is how to keep up with the rate of technology change. As hardware...

A well-designed, high-tech board room serves as a showcase to impress customers and investors. A large, well-outfitted...

What will the data center look like in 2019 and beyond? That’s one of the most pressing questions facing data center...

IT asset management has never been easy, and it becomes exponentially more difficult as data centers increase in...

Massive amounts of data travel around the world at the speed of light, so it’s easy to underestimate the complexities...

IDC has predicted the avalanche of data will reach 44 zettabytes by 2020 and 163 zettabytes by 2025. As data volumes...

The campus network, both wired and wireless, has never been more important. Users rely on the campus network for ready...

You would think that plugging a device into an electrical outlet would be the simplest operation in the data center....

Employees today are increasingly likely to work from outside the office, employing a variety of endpoint devices to...

In a previous post, we talked about the evolution of A/V technology from isolated, standalone systems to networked...

Whenever you go to a fast-casual dining establishment, coffee shop, deli or a movie theatre concession stand, you see...

On Oct. 18, more than 55 million people worldwide will participate in the Great ShakeOut, a drill designed to help...

A U.K.-based bank recently discovered the risks and challenges associated with data migration. When it was acquired by...

Video conferencing has become an indelible part of the enterprise landscape. Organizations are implementing video...

The traditional IT environment is built using best-of-breed components to support monolithic applications. Distinct...

Digital signage is great for engaging customers and boosting sales. It allows you to deliver custom video, images and...

As organizations implement more powerful systems for data analytics, artificial intelligence (AI) and other...

Most organizations could be more productive. Many business processes and workflows have bottlenecks that impede the...

Strategic Acquisitions, New Locations and Enhanced Solutions Enable Rahi to Support Integrated Environments and...

It’s the start of the 2018 college football season, and student athletes across the country are working hard to...

In our last post, we discussed the top five benefits of a digital signage solution. Digital signage can reduce the...

Organizations around the world are recognizing the benefits of digital signage as a transformative technology with...

People have been talking about “IT complexity” for years now, but a new survey conducted by Vanson Bourne adds...

In our last post, we discussed why many organizations are building out their own Internet points of presence (POPs)....

Consider these statistics from the Cisco Visual Networking Index Complete Traffic Forecast (2016-2021): Global IP...

Every time a mobile device or Internet of Things (IoT) sensor connects to the network, there’s a real risk of a data...

Scalability is a critical concern in today’s IT environment. Given the accelerating pace of demand for IT services,...

The Internet of Things (IoT) promises to revolutionize entire industries through greater efficiency, enhanced customer...

There’s a concept in building design and construction known as “commissioning.” Most of us understand the word...

When you think of a corporate boardroom, you probably envision a large space dominated by a mahogany table and leather...

Five years ago, end-users may have been willing to wait 10 seconds or so for a video to load. They may have put up...

Rahi’s A/V team is getting ready to head to the Infocomm Conference in Las Vegas June 2-8. This information-packed...

In the beginning was the data center, a centralized place where an organization’s IT assets were implemented and...

Data center operators are always looking for ways to save money and improve efficiency. Having the right tools can...

Energy costs eat up 25 percent to 60 percent of a data center’s operating expenses. For large facilities, that can...

The Rahi team is wrapping up International Telecoms Week at the Hyatt Regency and Swissôtel in Chicago. It’s been...

Microsoft recently announced plans to open its first data centers in the Middle East in 2019. Amazon will open its...

Storage capacity planning has always been challenging, and the difficulty has increased along with escalating data...

What is GDPR? How Does It Impacts Data Center Decision Making? Organizations around the world are focused on May 25th,...

Ever-increasing IT demands are driving data center modernization initiatives that leverage private cloud and...

Many organizations will face the need to relocate their data centers at some point in time. They may find that they are...

The shift from on-premises infrastructure to the cloud continues at a rapid pace. A recent study found that 93 percent...

Rapid advances in artificial intelligence (AI) are being enabled by the graphics processing units (GPUs) Nvidia...

“If you can’t measure it, you can’t manage it,” is an old business school maxim that’s just as applicable to...

Many organizations are opting to move into a colocation facility rather than build out or expand their own data...

High-density data centers are all about efficiency. IT managers look for technology solutions that deliver high levels...

According to the latest data from IDC, the worldwide market for converged infrastructure increased 10.8 percent year...

Data growth continues to accelerate, straining storage infrastructures and pressing organizations to find ways to...

For many years, the rack has been the standard unit of measure for data center resources. We talk about physical space...

Given the ever-increasing business demands for IT services, physical space is at a premium in many data center...

Given the ever-increasing business demands for IT services, physical space is at a premium in many data center...

No one wants to think about a disaster crippling or even destroying their data center. But even as hurricane season...

The Role of Tape Storage in 2021 and Beyond As data volumes continue to grow at a mindboggling rate, many...

Power is a fundamental component of data center operations. A highly reliable power source that maximizes system...

There are a number of reasons why data center densities are increasing. Business demands on IT are growing, and IT...

The cloud and mobile have made applications and data more accessible than ever. At the same time, they’ve made...

In our last post, we discussed the critical importance of digital transformation in today’s hyper-competitive...

The concept of “digital transformation” has gotten a lot of attention in recent years. It involves not just the...

Deploying and maintaining IT infrastructure on a global scale is challenging for even the largest enterprises....

Although organizations are moving more applications and services into the cloud, certain workloads still require the...

We’ve all heard the statistic. Eighty percent of the IT budget goes toward keeping the lights on in the data center....

In Greek mythology, the Hydra was a many-headed serpent that could grow two new heads whenever one was cut...

The fragility of the electric grid was demonstrated once again as Hurricanes Harvey and Irma caused widespread power...

They say that moving is the third most stressful event in life, following death and divorce. If the millions of...

The prefabricated building concept dates back to the early 19th century, when London carpenter Henry Manning developed...

There are a couple of Irish phenomena that have been making headlines lately. One is a loudmouthed mixed martial arts...

Cooling efficiency is a top priority for today’s data center operators. Increased data center densities enable...

Data Center Infrastructure Facebook recently provided an inside look at its mobile device testing lab when it allowed...

There’s nothing new under the sun, or so the saying goes. That’s certainly the case for out-of-band management, a...

In the previous post, we discussed why structured cabling is critical to the Internet of Things (IoT). Organizations...

We could trot out the statistics about the billions of Internet-connected devices, objects, sensors, corporate assets...

In a previous post, we discussed why data center operators are expanding beyond major metropolitan areas to build...

“Location, location, location” has long been the mantra in real estate. The idea is that location is the most...

Staying cool in the summer is at the top of everybody’s list. The same is true for data centers. Data centers have...

How many times have you experienced listener fatigue while listening to your ‘favorite’ playlist on a long trip?...

Do you have a favorite restaurant? What makes you want to always go there? What makes you loyal to them even though...

You remember the saying, ‘Prevention is better than cure?’ That saying is much more relevant, given how almost...

Staying connected is the current way of life. Bandwidth boundaries are blurring and with that, data centers are...

Understanding Data Center Consolidation Given the ever-increasing business demands for IT services, physical space...

The days of owning all the IT products and services within a company, are obsolete. With newer technologies edging out...

Spring cleaning can be hugely satisfying. The same is true while sprucing up your data centers. Data Center Dynamics...

When businesses grow they also need to pay attention to managing their data servers. Owning and operating a data...

The Internet of Things (IoT) has redefined the way we consume infotainment, and how we live our life day-to-day....

Most IT solution providers focus on compute, storage and networking technologies. Rahi Systems is uniquely qualified...

Japan IT Week brought together over 90,000 attendees to discuss the latest’s technologies and software from around...

Overview – In a matter of 60 seconds, today’s Internet handles hundreds of new websites going up, thousands of...

Overview – NTT Communications has established an IoT Office to provide customers with secure IoT solutions that...

As the digital universe continues to expand, data centers are grappling with the constant challenge of keeping their...

If you ask an ordinary man, where the hosting services are done, he is bound to say the US. It is true that most cloud...

“Through this new offer, Schneider Electric is addressing the latency, bandwidth and processing speed challenges...

Power & Cooling trends at top as the top challenges faced by almost all Data Center Operations & Management...

SAN JOSE, Calif.–(BUSINESS WIRE)–ZPE Systems® today unveiled NodeGrid Serial Console™. This new generation of...

Rahi Systems has consistently been above the national average for the % of women employed in the company. Our local...

Mark Twain famously said, “The report of my death has been grossly exaggerated.” The same quote could apply to...

IT process automation is a top priority for senior IT decision-makers, according to a new study conducted by...

Collaboration software became a critical investment for most companies in 2020 due to the need to support...

ResearchAndMarkets.com projects that the global SD-WAN market will see a compound annual growth rate of 31.9...

Deploying and managing IT infrastructure on a box-by-box basis locks IT teams in the role of technology...
